
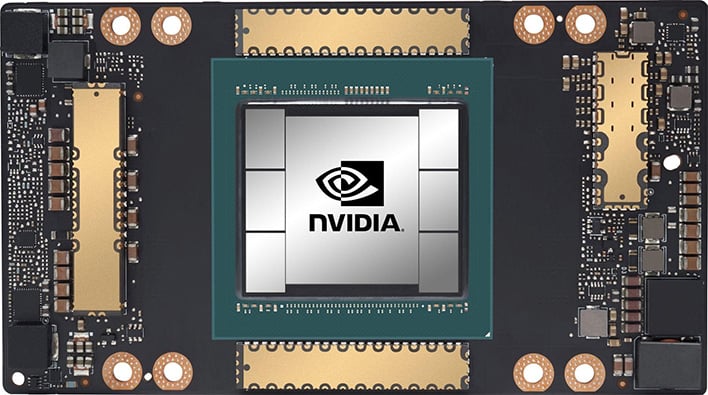

So far, the A100 GPU system beats every other offering available. On average, the new A100 is 1.5 to 2.5 times faster than the V100; the baseline for these results was the previous-generation leader, the V100 Volta GPU.

But how well does the A100 perform on non-AI benchmarks, and can we expect it to deliver the application improvements we have grown used to with previous GPU generations? In this paper, we benchmark the A100 GPU and compare it to four previous generations of GPUs, with particular focus on empirically quantifying our derived performance expectations and, should those expectations not be met, investigating whether the newly introduced data-movement features can offset any loss in performance. We find that the A100 delivers a smaller performance increase than previous generations for the well-known Rodinia benchmark suite. We also show that some of these performance anomalies can be remedied through clever use of the new data-movement features, which we microbenchmark, demonstrating where (and, more importantly, how) they should be used.

1.89/H100/hr on a fully optimized, high-performance architecture for model training, with NVIDIA H100 SXM5 GPUs (NVLink) and 3200 Gbps non-blocking IB.

The A100 scored 446 points on OctaneBench, claiming the title of the fastest GPU ever to run that benchmark. The A100 GPU was benchmarked in the latest 0.7 version of the benchmark.

The H100 is a cutting-edge GPU meant to scale across a wide range of workloads, from small enterprises to exascale high-performance computing (HPC) and trillion-parameter AI applications. Reproduce these results on your system by following the instructions in the Measuring Training and Inferencing Performance on NVIDIA AI Platforms Reviewers.

Recently, Nvidia introduced its 9th-generation HPC-grade GPUs, the Ampere 100, claiming significant performance improvements over previous generations, particularly for AI workloads, as well as introducing new architectural features such as asynchronous data movement.

About H100: A Data Center with Excellent Performance, Scalability, and Security.

We have performed some benchmarking of the NVIDIA A100 GPUs on Azure using these new VM SKUs and provided the results in the data tables below.

To check out these improvements, download the latest NVIDIA HPC-Benchmark container, version 21.4, from NGC. The HPC-Benchmark landing page includes detailed instructions and additional resources.

For many, Graphics Processing Units (GPUs) provide a source of reliable computing power. Benchmarks are commonly used to validate just how far you can push a computing resource while still maintaining confidence in the processing outcome. The plot below shows that with 128 DGX A100s, or 1024 NVIDIA A100 GPUs, the latest release of HPL-AI performs 2.6 times faster.
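The figures quoted in this post ("1.5 to 2.5 times faster", "2.6 times faster", "128 DGX A100s, or 1024 GPUs") all come down to simple ratios. The sketch below shows one way such numbers could be derived from your own timing runs. The function names are hypothetical helpers written for illustration, not part of any NVIDIA tool, and the code assumes each benchmark reports a wall-clock time in seconds.

```python
from math import prod

# A DGX A100 node houses eight A100 GPUs, which is how 128 nodes give 1024 GPUs.
GPUS_PER_DGX_A100 = 8


def total_gpus(nodes: int, gpus_per_node: int = GPUS_PER_DGX_A100) -> int:
    """Return the number of GPUs in a cluster of identical nodes."""
    return nodes * gpus_per_node


def speedup(baseline_seconds: float, new_seconds: float) -> float:
    """Return how many times faster the new run is than the baseline run."""
    if baseline_seconds <= 0 or new_seconds <= 0:
        raise ValueError("run times must be positive")
    return baseline_seconds / new_seconds


def geometric_mean(values) -> float:
    """Average a set of speedups; the geometric mean is the usual choice for ratios."""
    values = list(values)
    if not values:
        raise ValueError("need at least one value")
    return prod(values) ** (1.0 / len(values))


if __name__ == "__main__":
    print(total_gpus(128))  # 1024
```

To summarize a whole suite, compute `speedup` for each benchmark against the same baseline GPU and pass the results to `geometric_mean`, so that a single outlier does not dominate the average.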
